A 23-year-old developer from the United States has become the first person to solve one of the open problems posed by the legendary mathematician Paul Erdős with the help of ChatGPT 5.4 Pro. The problem had remained open since the 1960s, yet the model needed only 80 minutes of analysis to produce a solution. What set the approach apart is that ChatGPT applied a formula that is well known in mathematics but that nobody had previously used on this particular problem.

According to the developer, he did not expect the model to handle the problem on the first attempt. "I just asked the question and got an answer that fully matched the conditions of the problem. It was incredible," he said. The dialogue with ChatGPT included a detailed explanation of the solution as well as a confirmation that the approach was correct.

The Erdős problem, formulated in the middle of the 20th century, was long considered one of the hardest in number theory. Its solution could have significant consequences for adjacent areas of mathematics and computer science. Now, thanks to ChatGPT, the problem is officially closed, which has provoked a strong reaction in the scientific community.

Experts note that this case demonstrates the growing potential of AI for solving hard mathematical problems. They stress, however, that fully understanding the solution still requires a human who can interpret and verify the results the model produces.

The developer has already prepared a paper describing the solution in detail and submitted it to a peer-reviewed mathematics journal. If the paper is accepted, it will be the first case in which AI played a key role in proving a mathematical conjecture.

Here is the dialogue with ChatGPT:

I’m treating this as a genuine extremal proof search, with two live paths: either compress primitive tails by prime-factor layers to force a universal $1+o(1)$ bound, or construct a counterexample beating 1 by a fixed amount.

I found a promising probabilistic route: use a “first-entry into $[x,\infty)$” random divisibility chain built from prime powers, weighted by $\Lambda(q)$. Then $\sum_{q\mid n}\Lambda(q)=\log n$ converts the target sum into hitting probabilities, with only an $O(1/\log x)$ boundary loss.

A competing construction now looks much less viable: because unions of disjoint prime-support blocks stay primitive, any counterexample would need to add a second large-prime block to a near-extremal $\Omega(n)=k$ layer, but the scale mismatch makes that extra contribution too small to overcome the known deficit.

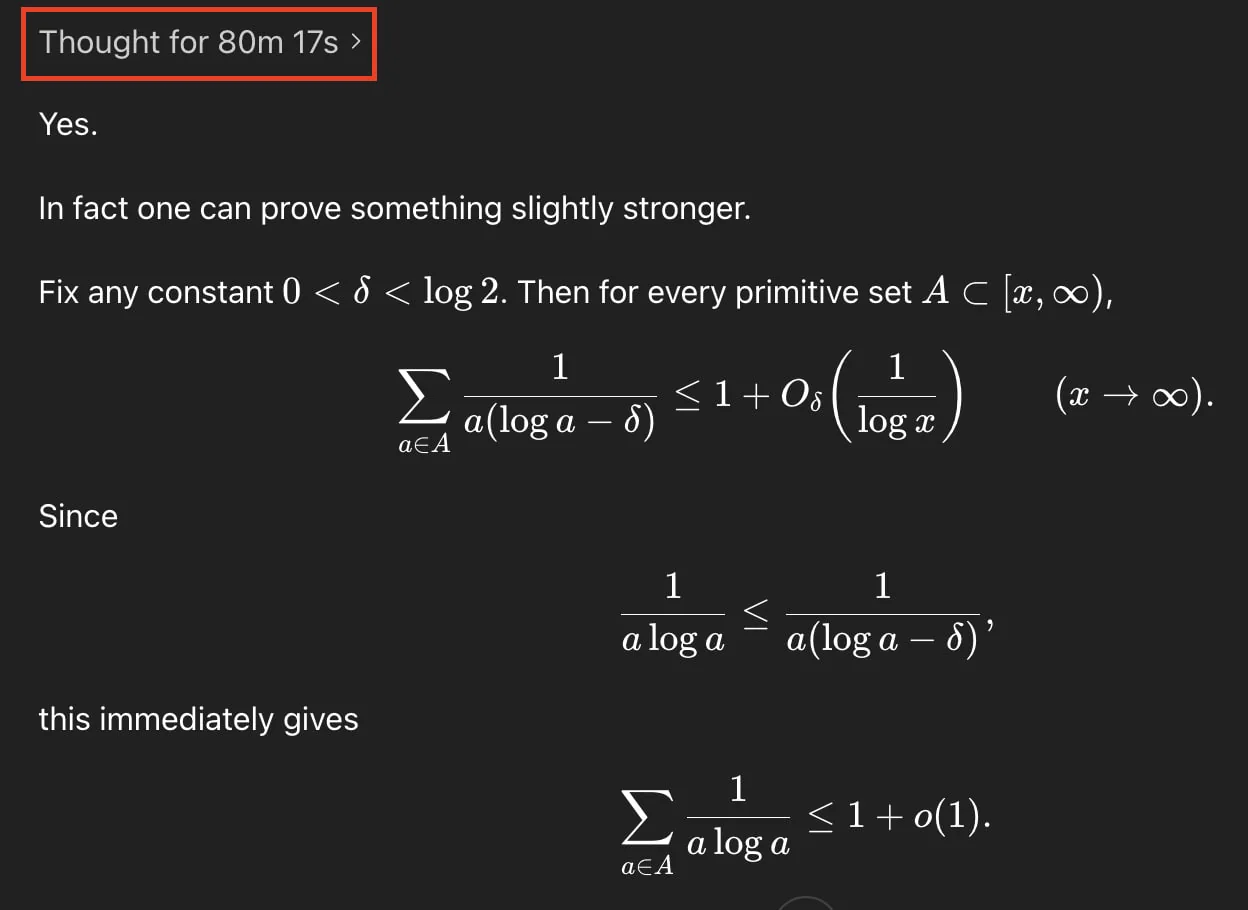

Yes.

In fact one can prove something slightly stronger.

Fix any constant $0<\delta<\log 2$. Then for every primitive set $A\subset[x,\infty)$,

$$\sum_{a\in A}\frac1{a(\log a-\delta)}\le 1+O_\delta\!\left(\frac1{\log x}\right)\qquad(x\to\infty).$$

Since

$$\frac1{a\log a}\le\frac1{a(\log a-\delta)},$$

this immediately gives

$$\sum_{a\in A}\frac1{a\log a}\le 1+o(1).$$

So the statement in the problem is true.

Proof

Write $\Lambda$ for the von Mangoldt function. I will sum over all integers $q\ge1$; only prime powers contribute because $\Lambda(q)=0$ otherwise.

The key identity is the exact divisor sum

$$\sum_{q\mid n}\Lambda(q)=\log n.$$
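The divisor-sum identity is easy to check numerically. The following is a minimal Python sketch, not part of the original dialogue; the helper names `von_mangoldt` and `divisor_sum` are illustrative assumptions, and the trial-division approach is chosen only for readability.

```python
import math

def von_mangoldt(q: int) -> float:
    """Return log p if q = p^k for a prime p and k >= 1, else 0.0."""
    if q < 2:
        return 0.0
    # Find the smallest prime factor p of q by trial division.
    p = 2
    while p * p <= q:
        if q % p == 0:
            break
        p += 1
    else:
        p = q  # no factor up to sqrt(q), so q itself is prime
    # q is a prime power exactly when dividing out p leaves 1.
    while q % p == 0:
        q //= p
    return math.log(p) if q == 1 else 0.0

def divisor_sum(n: int) -> float:
    """Sum of Lambda(q) over all divisors q of n."""
    return sum(von_mangoldt(q) for q in range(1, n + 1) if n % q == 0)

# The two printed columns should agree up to floating-point rounding.
for n in (12, 97, 360):
    print(n, divisor_sum(n), math.log(n))
```

The identity holds because each prime $p$ with $p^k$ exactly dividing $n$ contributes $\log p$ once for each of the divisors $p, p^2, \dots, p^k$, giving $k\log p$ in total.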

The proof is a weighted “first-entry” decomposition on the divisibility poset, but with one important twist: I only allow large prime-power jumps. The finitely many small prime powers are absorbed into the initial mass, and that costs only $O(1/\log x)$.

1. A tail estimate for prime powers

Let

$$M(t):=\sum_{q\le t}\frac{\Lambda(q)}{q}.$$

A standard unconditional estimate (Mertens’ first theorem) says

$$M(t)=\log t+O(1).$$
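To see the bounded error in this estimate concretely, here is a small Python sketch, not part of the original dialogue, that tabulates $E=M(t)-\log t$ for a few values of $t$. The function names `mangoldt_table` and `mertens_error` are illustrative assumptions, and a numerical table is of course no substitute for the theorem itself.

```python
import math

def mangoldt_table(limit: int) -> list[float]:
    """Lambda(q) for 0 <= q <= limit, via a sieve over primes and their powers."""
    lam = [0.0] * (limit + 1)
    is_prime = [True] * (limit + 1)
    for p in range(2, limit + 1):
        if not is_prime[p]:
            continue
        # Cross out the proper multiples of the prime p.
        for multiple in range(2 * p, limit + 1, p):
            is_prime[multiple] = False
        # Record log p at every power of p up to the limit.
        power = p
        while power <= limit:
            lam[power] = math.log(p)
            power *= p
    return lam

def mertens_error(t: int) -> float:
    """E = M(t) - log t, where M(t) = sum_{q <= t} Lambda(q) / q."""
    lam = mangoldt_table(t)
    m_t = sum(lam[q] / q for q in range(2, t + 1))
    return m_t - math.log(t)

# The printed differences stay bounded as t grows.
for t in (10, 100, 1000, 10000, 100000):
    print(t, round(mertens_error(t), 4))
```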

For $m\ge1$, $y\ge2$, set

$$T_\delta(m,y):=\sum_{q\ge y}\frac{\Lambda(q)}{q\,\log(mq)\,(\log(mq)-\delta)}.$$

I claim that, uniformly as $my\to\infty$,

$$T_\delta(m,y)=\frac1\delta\log\frac{\log(my)}{\log(my)-\delta}+O_\delta\!\left(\frac1{\log^2(my)}\right).\tag{1}$$

To prove this, write $L=\log m$, $U=\log y$, and

$$F(u):=\frac1{(L+u)(L+u-\delta)}.$$

Then

$$T_\delta(m,y)=\int_U^\infty F(u)\,dM(e^u).$$

Since $M(e^u)=u+E(u)$ with $E(u)=O(1)$, we get

$$T_\delta(m,y)=\int_U^\infty F(u)\,du+\int_U^\infty F(u)\,dE(u).$$

On any finite interval, $E$ has bounded variation, so integration by parts gives

$$\int_U^\infty F(u)\,dE(u)=-F(U)E(U)-\int_U^\infty E(u)F'(u)\,du.$$

Now

$$F(U)\ll_\delta\frac1{(L+U)^2},\qquad F'(u)\ll_\delta\frac1{(L+u)^3},$$

and $E(u)=O(1)$, so

$$\int_U^\infty F(u)\,dE(u)=O_\delta\!\left(\frac1{(L+U)^2}\right).$$

Also

$$\int_U^\infty\frac{du}{(L+u)(L+u-\delta)}=\frac1\delta\log\frac{L+U}{L+U-\delta}.$$

This is exactly (1).
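The closed form for the main-term integral comes from the partial fractions $\frac1{s(s-\delta)}=\frac1\delta\left(\frac1{s-\delta}-\frac1s\right)$ with $s=L+u$. As a hedged sanity check that is not part of the original dialogue, the Python sketch below compares a midpoint-rule integral over a finite range with the difference of the closed form at the endpoints. The names `integrand`, `closed_form`, and `midpoint_integral`, and the sample values of `s` and `delta`, are illustrative assumptions.

```python
import math

def integrand(s: float, delta: float) -> float:
    """F written in the variable s = L + u."""
    return 1.0 / (s * (s - delta))

def closed_form(s: float, delta: float) -> float:
    """(1/delta) * log(s / (s - delta)): the integral of F over [s, infinity)."""
    return math.log(s / (s - delta)) / delta

def midpoint_integral(a: float, b: float, delta: float, steps: int = 200_000) -> float:
    """Midpoint-rule approximation of the integral of F over [a, b]."""
    h = (b - a) / steps
    return h * sum(integrand(a + (i + 0.5) * h, delta) for i in range(steps))

delta = 0.5           # sample value in (0, log 2)
a, b = 10.0, 1000.0   # sample range for s = L + u
numeric = midpoint_integral(a, b, delta)
exact = closed_form(a, delta) - closed_form(b, delta)
print(numeric, exact)
```

The integral over $[a,b]$ equals the tail from $a$ minus the tail from $b$, which is why the two printed numbers should agree closely.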

2. Choose a cutoff $Y$ and define the jump kernel

Fix $\delta\in(0,\log2)$.

Using (1), choose once and for all an integer $Y\ge2$, large enough in terms of $\delta$, so that for all sufficiently large $m$,

$$(\log m-\delta)\,T_\delta(m,Y)\le 1.\tag{2}$$

Why is this possible? By (1),

$$(\log m-\delta)\,T_\delta(m,Y)=(\log m-\delta)\left(\frac1\delta\log\frac{\log(mY)}{\log(mY)-\delta}+O_\delta\!\left(\frac1{\log^2 m}\right)\right).$$

Using $\log(1+t)\le t$,

$$\frac1\delta\log\frac{\log(mY)}{\log(mY)-\delta}\le\frac1{\log(mY)-\delta},$$

so

$$(\log m-\delta)\,T_\delta(m,Y)\le\frac{\log m-\delta}{\log(mY)-\delta}+O_\delta\!\left(\frac1{\log m}\right)=1-\frac{\log Y}{\log(mY)-\delta}+O_\delta\!\left(\frac1{\log m}\right).$$

Since $\log Y$ is a fixed positive constant which we may choose as large as we like, while the implied constant in the error term comes from the uniform estimate (1) and does not depend on $Y$, the deficit from the main term dominates the error for large $m$, giving (2).

Now fix $x$ so large that (2) holds for all $m\ge x$.

Define

$$W(n):=\frac1{n(\log n-\delta)}.$$

For $m\ge x$ and $q\ge Y$, define

$$P(m,mq):=\frac{(\log m-\delta)\Lambda(q)}{q\,\log(mq)\,(\log(mq)-\delta)}.$$

Because of (2), for every $m\ge x$,

$$\sum_{q\ge Y}P(m,mq)\le 1.$$

So this is a genuine sub-Markov jump kernel on $[x,\infty)$: from $m$, the chain either jumps to $mq$ with the above probability, or stops.

Every jump multiplies by an integer $q\ge Y>1$, so every path is strictly increasing and each visited state divides every later one.

3. The entrance mass

Define the “source” mass on integers $n\ge x$ by

$$b_x(n):=\frac1{n\log n(\log n-\delta)}\left(\sum_{\substack{q\mid n\\ q<Y}}\Lambda(q)+\sum_{\substack{q\mid n\\ q\ge Y\\ n/q<x}}\Lambda(q)\right).\tag{3}$$

This means:

- all small prime-power divisors $q<Y$ are inserted directly at $n$, rather than represented by transitions;

- among the large prime powers $q\ge Y$, those whose parent $n/q$ lies below $x$ are also inserted directly at $n$.

Let

$$B_x:=\sum_{n\ge x}b_x(n).$$

We will show

$$B_x=1+O_\delta\!\left(\frac1{\log x}\right).\tag{4}$$

First assume this and finish the main argument.

Start the sub-Markov chain with initial distribution

$$\mathbb P(N_0=n)=\frac{b_x(n)}{B_x}.$$

Let $v(n)$ be the probability that the chain ever visits $n$.

Because the chain is increasing, the only ways to hit $n$ are:

- start at $n$, or

- come from $n/q$ via one last jump with $q\ge Y$.

So

$$v(n)=\frac{b_x(n)}{B_x}+\sum_{\substack{q\mid n\\ q\ge Y\\ n/q\ge x}}v(n/q)\,P(n/q,n).\tag{5}$$

Now I claim that

$$v(n)=\frac{W(n)}{B_x}\qquad(n\ge x).\tag{6}$$

This is proved by induction on $n$. If it holds for all smaller integers, then from (5),

$$v(n)=\frac1{B_x}\left(b_x(n)+\sum_{\substack{q\mid n\\ q\ge Y\\ n/q\ge x}}W(n/q)\,P(n/q,n)\right).$$

But

$$W(n/q)\,P(n/q,n)=\frac{1}{(n/q)(\log(n/q)-\delta)}\cdot\frac{(\log(n/q)-\delta)\Lambda(q)}{q\log n(\log n-\delta)}=\frac{\Lambda(q)}{n\log n(\log n-\delta)}.$$

Hence

$$b_x(n)+\sum_{\substack{q\mid n\\ q\ge Y\\ n/q\ge x}}W(n/q)\,P(n/q,n)=\frac1{n\log n(\log n-\delta)}\sum_{q\mid n}\Lambda(q)=\frac1{n(\log n-\delta)}=W(n),$$

because the two sums in $b_x(n)$ together with the transition sum partition all divisors $q\mid n$. Thus (6) holds.
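The closing of the weight recursion is a purely algebraic identity, so it can be checked numerically for small parameters. The Python sketch below is not part of the original dialogue. The constants `DELTA`, `Y`, and `X` are illustrative assumptions, far smaller than the proof requires for the sub-Markov bound (2), and the function names are hypothetical. It only verifies that the source mass plus the transition mass reproduces $W(n)$.

```python
import math

DELTA = 0.5  # sample value in (0, log 2)
Y = 8        # illustrative cutoff; the proof needs Y large in terms of DELTA
X = 50       # illustrative threshold; the proof takes x large

def von_mangoldt(q: int) -> float:
    """Return log p if q = p^k for a prime p and k >= 1, else 0.0."""
    if q < 2:
        return 0.0
    p = 2
    while p * p <= q:
        if q % p == 0:
            break
        p += 1
    else:
        p = q  # q is prime
    while q % p == 0:
        q //= p
    return math.log(p) if q == 1 else 0.0

def W(n: int) -> float:
    """Target weight 1 / (n (log n - delta))."""
    return 1.0 / (n * (math.log(n) - DELTA))

def jump_probability(m: int, n: int) -> float:
    """Kernel P(m, n) for n = m * q, as in the definition of P(m, mq)."""
    q = n // m
    log_n = math.log(n)
    return (math.log(m) - DELTA) * von_mangoldt(q) / (q * log_n * (log_n - DELTA))

def source_mass(n: int) -> float:
    """b_x(n): divisors q < Y, plus divisors q >= Y whose parent n/q is below X."""
    total = sum(von_mangoldt(q) for q in range(1, n + 1)
                if n % q == 0 and (q < Y or n // q < X))
    log_n = math.log(n)
    return total / (n * log_n * (log_n - DELTA))

def transition_mass(n: int) -> float:
    """Sum of W(n/q) P(n/q, n) over divisors q >= Y with parent n/q >= X."""
    return sum(W(n // q) * jump_probability(n // q, n)
               for q in range(Y, n + 1) if n % q == 0 and n // q >= X)

def recursion_gap(n: int) -> float:
    """Should vanish up to rounding: source + transitions - W(n)."""
    return source_mass(n) + transition_mass(n) - W(n)

for n in (400, 512, 1000):
    print(n, recursion_gap(n))
```

The check works for any cutoff and threshold with $\log x>\delta$, because the partition of the divisors of $n$ does not depend on the sub-Markov condition; that condition is needed only to interpret $P$ as a probability.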

Now let $A\subset[x,\infty)$ be primitive. A sample path cannot hit two distinct elements of $A$, because along the path each earlier state divides each later state. Therefore

$$\sum_{a\in A}v(a)\le 1.$$

Using (6),

$$\sum_{a\in A}\frac1{a(\log a-\delta)}=\sum_{a\in A}W(a)=B_x\sum_{a\in A}v(a)\le B_x.$$

So by (4),

$$\sum_{a\in A}\frac1{a(\log a-\delta)}\le 1+O_\delta\!\left(\frac1{\log x}\right).$$

Finally,

$$\sum_{a\in A}\frac1{a\log a}\le\sum_{a\in A}\frac1{a(\log a-\delta)}\le 1+O_\delta\!\left(\frac1{\log x}\right)=1+o(1).$$

This proves the theorem, assuming (4).

4. Estimating the total entrance mass $B_x$

Split $B_x$ according to the two terms in (3):

$$B_x=B_x^{\mathrm{small}}+B_x^{\mathrm{ent}}.$$

The small-$q$ part

We have

$$B_x^{\mathrm{small}}=\sum_{q<Y}\frac{\Lambda(q)}{q}\sum_{m\ge x/q}\frac1{m\log(mq)(\log(mq)-\delta)}.$$

For each fixed $q<Y$, the inner summand is decreasing in $m$, so by comparison with the integral,

$$\sum_{m\ge x/q}\frac1{m\log(mq)(\log(mq)-\delta)}\ll_\delta\int_{x/q}^{\infty}\frac{dt}{t\log(tq)(\log(tq)-\delta)}.$$

Substitute $u=\log(tq)$, $dt/t=du$. Since $t=x/q$ gives $u=\log x$,

$$\int_{x/q}^{\infty}\frac{dt}{t\log(tq)(\log(tq)-\delta)}=\frac1\delta\log\frac{\log x}{\log x-\delta}=O_\delta\!\left(\frac1{\log x}\right).$$

Because $Y$ is fixed,

$$B_x^{\mathrm{small}}=O_\delta\!\left(\frac1{\log x}\right).\tag{7}$$

The first-entry part

Now

$$B_x^{\mathrm{ent}}=\sum_{m<x}\frac1m\sum_{q\ge\max(Y,x/m)}\frac{\Lambda(q)}{q\log(mq)(\log(mq)-\delta)}.$$

Apply the tail estimate (1) with $y=\max(Y,x/m)$. Then

$$m\,y=\max(mY,x),$$

so if we put

$$M_m:=\log(m\,y)=\max(\log(mY),\log x),$$

we have

$$M_m\in[\log x,\ \log x+\log Y].$$

Therefore, uniformly in $m<x$,

$$\sum_{q\ge\max(Y,x/m)}\frac{\Lambda(q)}{q\log(mq)(\log(mq)-\delta)}=\frac1\delta\log\frac{M_m}{M_m-\delta}+O_\delta\!\left(\frac1{M_m^2}\right)=\frac1{\log x}+O_\delta\!\left(\frac1{\log^2 x}\right).$$

Hence

$$B_x^{\mathrm{ent}}=\left(\frac1{\log x}+O_\delta\!\left(\frac1{\log^2 x}\right)\right)\sum_{m<x}\frac1m.$$

Since $\sum_{m<x}1/m=\log x+O(1)$,

$$B_x^{\mathrm{ent}}=1+O_\delta\!\left(\frac1{\log x}\right).\tag{8}$$

From (7) and (8),

$$B_x=1+O_\delta\!\left(\frac1{\log x}\right),$$

which is exactly (4).

The proof is complete. ∎

Conclusion

So the answer to your problem is yes:

$$\sup_{\substack{A\subset[x,\infty)\\ A\ \text{primitive}}}\sum_{a\in A}\frac1{a\log a}\le 1+o(1).$$

The argument actually gives the stronger weighted form

$$\sum_{a\in A}\frac1{a(\log a-\delta)}\le 1+O_\delta\!\left(\frac1{\log x}\right)$$

for every fixed $0<\delta<\log2$.

This is also sharp at the constant level, since the usual $k$-almost-prime examples already show that the extremal value approaches $1$.

I’m checking the argument carefully before I typeset it, especially the normalization and the tail estimate, so the paper version is fully watertight.

I’m also stress-testing the cutoff and sub-Markov construction, including boundary terms, to ensure the first-entry decomposition gives an exact $1+O(1/\log x)$ majorant and the weight recursion closes correctly.

I’ve now shaped the core argument into a paper draft, and I’m polishing the prime-power Mertens lemma so that the analytic input is stated cleanly and proved in a publishable form.

Author

Sergey Sergeev is a practicing trader and the author of FinTerminal. He has worked with Forex, cryptocurrencies, and stocks since 2013, analyzing market risk, volatility, trade execution, and price behavior around news events. His articles combine his own trading experience, market statistics, and checks of broker terms for retail traders and investors. In preparing publications he relies on regulator data, market statistics, exchange quotes, and publicly available broker terms, separately flagging risks, limitations, and disputed points that are worth checking before making a decision.